Anyone who has struggled with car windows, door locks, audio settings, and climate controls buried in the latest onboard digital platforms, without a manual control in sight, will feel some nostalgia for the slogan of Lockheed Skunk Works lead engineer Kelly Johnson: “Keep it simple, stupid,” or KISS for short. The mantra applies today far beyond Johnson’s original concern, which was that aircraft repair teams be able to fix mechanical problems with limited resources in remote locations. These days you hear it from critics of cumbersome government solutions and over-engineered development programs of all kinds.

The trouble is that it’s bad advice for complex problems. Complex challenges require complex solutions. Simple solutions have limited reach. When confronted with complex problems, development practitioners should look for creative answers instead of hoping for miracles of off-the-shelf simplicity.

Many readers will be tempted to give a counter-example. What’s so complex about Wal-Mart’s strategy of low prices? What about the time we stopped that strange knocking in the middle of the night by tying string around the water heater pipe? What’s wrong with simplifying the tax code?

Though they come from all walks of life, the counter-examples will share one feature. The problems they solve are not really complex. They may be complicated, but not complex. The difference can set you free if you are a creative problem-solver because it will show exactly where your creativity can pay off.

Complicated problems are the ones that are hard to describe. For example, it may be hard to describe all the types of mosquito-borne disease that a health program is supposed to address.

Complex problems are the ones whose outcomes are hard to describe. For example, the job growth in a region may be highly unpredictable, rising and falling without any clear relationship to other measures of economic activity or social well-being.

Complicated problems can have simple outcomes. The incidence of all the diseases borne by a particular mosquito, for instance, may rise together in unprotected villages during rainy seasons. Perhaps it’s no surprise that well-draped bed-nets can address the problem.

Complex problems, in contrast, may be easy to describe. There’s nothing complicated, for instance, about wanting to increase job growth. But a complex pattern of job-growth outcomes is by definition hard to describe – even as a function of the presence or absence of several factors. And that suggests simple solutions will not suffice.

Why are simple solutions inadequate for complex problems? The idea goes back at least to the 1950s when British cybernetics pioneer Ross Ashby set out his Law of Requisite Variety (LRV). Ashby was interested in controls that regulate the states of a system like the temperature of a house. Ashby’s LRV states that the number of possible states of a control has to be at least as great as the number of possible states of the system that it regulates. If a thermometer bursts on a hot day, for example, its range was too small for its environment.

These days, engineers apply the LRV to any cause-and-effect pair and to the indicators that measure them. They have also refined the principle into something they can prove with basic information theory: the number (or order of magnitude) of typical results for an indicator measuring a cause has to be at least as great as the number (or order of magnitude) of typical results for an indicator measuring its effect. In today’s shorthand, the information content of a cause must be at least as great as the information content of its effect.
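One way to write the information-theoretic version down, as a sketch rather than Ashby's own formulation: let $X$ be the indicator for the cause and $Y$ the indicator for the effect, and assume for simplicity that the effect is fully determined by the cause, $Y = f(X)$. The symbols $X$, $Y$, $f$, and the entropy $H$ are notation introduced here for illustration.

$$
H(X) = -\sum_{x} p(x)\,\log_2 p(x), \qquad Y = f(X) \;\Longrightarrow\; H(Y) \le H(X) \le \log_2 N_X
$$

Here $N_X$ is the number of distinct results the cause indicator can take. Even when the relationship is noisy rather than exact, the information the two indicators share satisfies $I(X;Y) \le \min\{H(X), H(Y)\}$, so a cause with low information content can account for only part of a richer effect.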

The LRV can show, for example, when a causal explanation of an outcome is missing something. Suppose we want to explain what drives job growth in a region. And the growth numbers we need to explain bounce around among four distinct results: in some seasons, job growth is so high that few people have free time; in others, it’s high enough to keep up with kids entering the work force; in still others, you see a lot of kids hanging around in the parks without work; and in yet others, unemployment is widespread.

Now let’s say – just to keep the example simple – that an economist has completed a study showing that employment in regions like this one depends almost entirely on small-business credit. The question is whether the economist is right about our region.

So we look at the credit history of the region and find that small-business loans in this region historically show just two distinct levels of growth at different times. Sometimes they grow about 5% per year faster than the economy, and sometimes they decline about 5% per year – but only very rarely anything else.

According to the LRV – and common sense – that’s enough to see that loan growth cannot provide a full explanation of job growth in our region. Loan growth in this region has just two gears – high and low. But job growth has four. Something else must be going on.
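The counting argument can be checked in a few lines of Python. This is only an illustrative sketch: the level labels are shorthand invented here for the two loan-growth levels and four job-growth results described above, and the helper names are hypothetical.

```python
import math
from itertools import product

# Shorthand labels (assumed for illustration) for the levels in the example.
loan_growth_levels = ["up about 5%", "down about 5%"]
job_growth_levels = [
    "very high",
    "keeps pace with new entrants",
    "many young people without work",
    "widespread unemployment",
]

def max_information_bits(levels):
    """Upper bound on information content: log2 of the number of distinct levels."""
    return math.log2(len(set(levels)))

def has_requisite_variety(cause_levels, effect_levels):
    """A cause needs at least as many distinct levels as the effect it explains."""
    return len(set(cause_levels)) >= len(set(effect_levels))

loan_bits = max_information_bits(loan_growth_levels)
job_bits = max_information_bits(job_growth_levels)

# Try every possible rule that assigns one job-growth result to each loan level
# and record the largest number of distinct job-growth results any rule reaches.
max_reachable = max(
    len(set(rule))
    for rule in product(job_growth_levels, repeat=len(loan_growth_levels))
)

print(f"loan growth: at most {loan_bits} bits")
print(f"job growth:  up to {job_bits} bits")
print("loans alone suffice:", has_requisite_variety(loan_growth_levels, job_growth_levels))
print("job-growth results reachable from loans alone:", max_reachable)
```

However the two loan levels are mapped onto job-growth results, no rule reaches more than two of the four, which is the gap the text attributes to some other driver.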

Of course, a good causal explanation needs more than information content as rich as what it is supposed to explain. The drivers in a good causal explanation must also have a high impact on what they explain. Together, impact and information content are the hallmark of explanations with high predictive power.

Baseball provides some ready examples of how impact and information content contribute to the power of a causal explanation. In Moneyball, his 2003 account of how the Oakland Athletics rode a better statistical understanding of probabilistic causation to the playoffs, Michael Lewis recounts how Billy Beane and his statistical guru realized that the key baseball metrics of the day, earned-run averages (ERAs) and batting averages (AVGs), failed to predict win-loss records.

ERAs lack impact. Teams with widely varying ERAs often have identical win-loss records. AVGs lack information content. Team batting averages are much more tightly clustered – and thus more easily statistically described – than win-loss records. In terms of complexity, batting average results are not rich enough to explain the complex, hard-to-describe reality of major-league teams’ win-loss records.

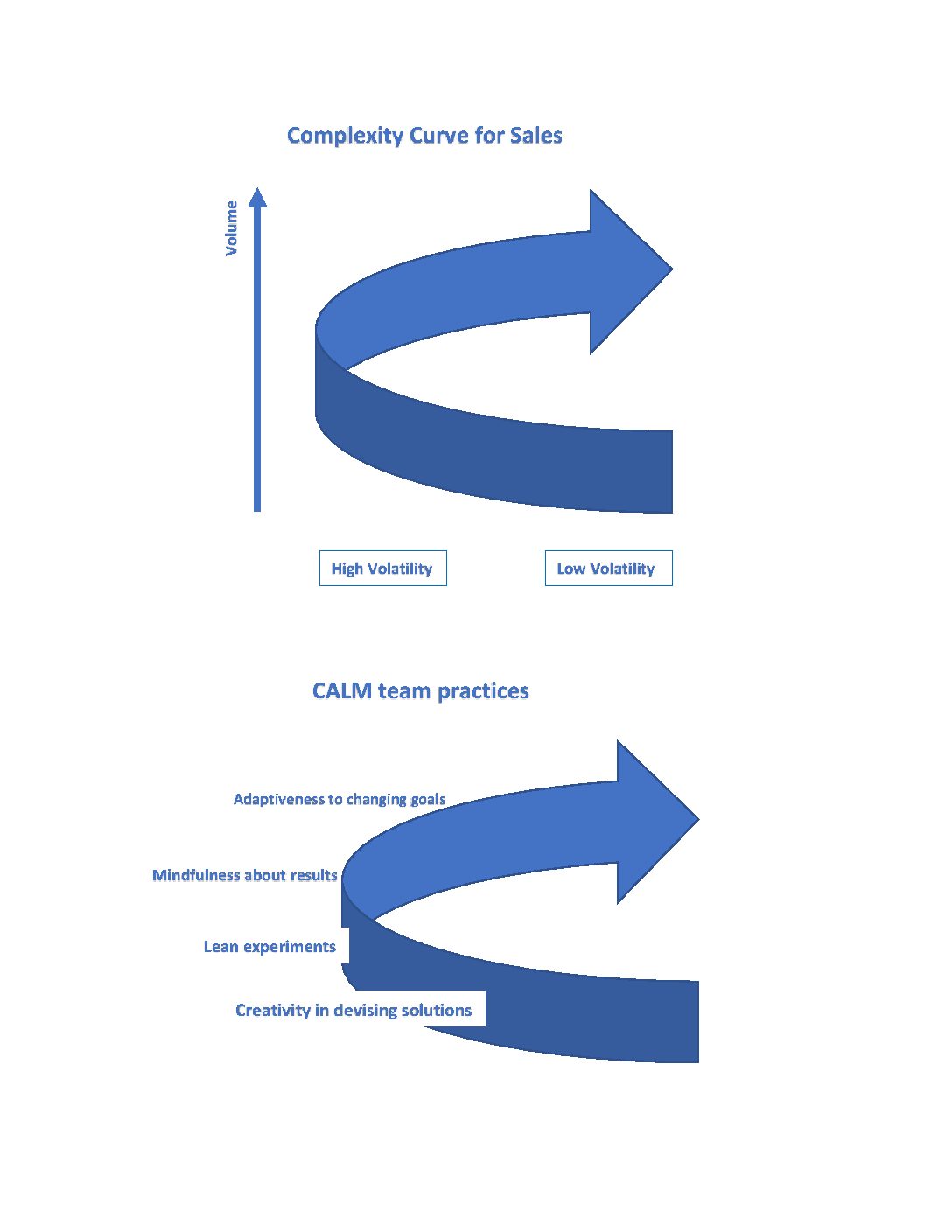

What do complex solutions look like? There are two signs that a problem solver is developing a solution rich enough to explain and control complex outcomes. One is that it’s unique – simple solutions are often widespread while complex ones tend to be customized to specific circumstances.

For example, Yale’s Dean Karlan and the Jameel Poverty Action Lab at MIT found that an antipoverty program of BRAC in Bangladesh had significant impact in raising participants out of poverty beyond the life of the program. This was remarkable against a background of dozens of studies of simple antipoverty programs that have shown little significant impact when subjected to randomized controlled trials.

The BRAC program is not simple. It has not one or two but five parts: the provision of livestock for making money; training on how to raise the livestock; food or cash so the family would not be forced to eat the livestock; a savings account; and physical and mental health support. Taken together, this is one of the highest-impact interventions tested so far to raise families permanently out of poverty – a complex solution for a problem that has proven to be surprisingly complex.

The other sign of adequate complexity is that the solution addresses root causes. Why do root causes have high information content or complexity? If an immediate cause has at least as much information content as its effect, and a cause of that immediate cause has at least as much information content as the immediate cause, then a root cause of both of those causes and the effect will have the most information content of all.
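The chain argument can be written compactly under a simplifying assumption that each link is deterministic. The symbols are introduced here for illustration: $R$ is the root cause, $C$ the immediate cause, $E$ the effect, and $H$ the entropy, or information content, of each indicator.

$$
C = g(R), \quad E = f(C) \;\Longrightarrow\; H(R) \ge H(C) \ge H(E)
$$

Each step along the chain can merge distinct states but cannot create new ones, so the information content can only stay level or fall between the root cause and the final effect. Real causal links are noisy, so this is a sketch of the intuition rather than a general proof.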

More intuitively, root causes lie at the beginning of long causal chains. They provide more robust explanations of what eventually happens, like the information in the first chapters of a novel.

Joe Dougherty’s excellent survey of barriers to small-business access to finance, “The Elephant in the Room” (innovations 10:1-2), provides an example of a complex problem that has defied solution for decades, and of the complex set of constraining factors that recent research has begun to indict.

Constraining the ability of small businesses to seek finance, for example, are their lack of formal registration, risky operating environments, and management inexperience. Constraining the supply of credit are the domination of financial sectors by depository institutions unsuited to small-business needs, ready investment alternatives to banks in the form of government debt, and limited access to best practices. Environmental constraints include poor contract enforcement, poor credit information, and poor information on collateral liens.

These are root causes of a phenomenon – the scattershot access to credit of small businesses across the developing world – that a generation of previous research has conspicuously failed to explain. Like the BRAC intervention, these root causes are not simple. But they start to point to a solution.

Dougherty goes on to list five design principles for specific solutions that have the potential to transform small-business access to finance in the developing world. They include fully understanding the ecosystem of the particular problem, setting clear conditions for local government support, experimentation, indirect interventions, and being ready to fail. This is not just a prescription of modesty. The list pivots around the central principle of experimentation.

Dougherty is right that experimentation is central to complex problem solving. Because they are tailor-made to address a complex problem in all its richness, particularity, peculiarity, and uniqueness, complex solutions will often miss their target. But because complex solutions are so specific, the failures are always illuminating and point the way to success.

Simple solutions, in contrast, are short on implementation specifics, slop over their edges, and yield mixed, mediocre, and ambiguous results over a wide variety of problems. As a result, the way forward will be unclear. When it comes to complex problems, complex solutions offer a better deal than simple ones: trial, error, and improvement will go farther than ambiguous outcomes leading nowhere.

The prime ingredient of complex problem solving, nevertheless, is creativity. We have solved most of the simple problems. The complex ones remain. It is time to give up the myth of the virtuously simple solution when there is nothing virtuously simple about the problem.

Keeping it simple is a stupid prescription for the problems of today. We must respect complexity, design for the problem at hand, be ready to fail, and experiment until we solve.